Have you noticed the video surveillance cameras in retail shops watching your every move? In many cases, there are one or two security guards monitoring the video feed to catch shoplifters. Since they can’t track the movement of every shopper who sets foot in the shop all the time, it’s left to the judgment of security guards to decide who could be a potential shoplifter.

Often, that judgment is influenced by subconscious biases about race, gender and appearance. As a result, guards can miss shoplifters who do not match the profile they are watching for, while innocent people are tracked for no reason other than bias.

Several companies in Seattle and elsewhere are developing technologies such as computer vision and Artificial Intelligence (AI) which they say can remove biases that are a part of human nature.

For instance, AI can be trained to track the movement of the product rather than the person, which helps in catching shoplifters, irrespective of their race or appearance. This is the technology that powers cashier-less stores including Amazon Go.

“Whenever a judgment is required in a complex situation, AI has the potential to provide a wealth of context and supplemental information that can lead to a solid and unbiased decision,” said Dr. Oren Etzioni, a senior researcher in the AI field.

Etzioni is with the downtown Seattle-based Allen Institute for Artificial Intelligence, or Ai2, which focuses on conducting high-impact research and engineering to advance the field of artificial intelligence, all for the common good. It was founded by the late Microsoft co-founder Paul Allen in 2014.

The potential to help humans make decisions based on data and not on biases has led businesses in Seattle to develop Artificial Intelligence and its associated technologies, including computer vision, natural language processing and machine learning. Seattle is a growing hub for AI-powered businesses – a search for AI and Seattle on Glassdoor shows over 5,500 results.

No surprise then that several innovative companies are coming out of Seattle.

Seattle-based Mighty AI provides access to high-quality training data to companies building computer-vision based products across a variety of industries including automotive, retail and robotics.

“Computer vision is starting to spring up in all sorts of applications,” said Daryn Nakhuda, CEO of Mighty AI. “Autonomous vehicles, new retail experiences like Amazon Go, automated visual quality controls on manufacturing lines, portrait mode on your iPhone and even things like drones that can predict crop yields as they fly over agricultural grounds.”

But Nakhuda said it’s crucial that such technologies are trained on unbiased, diverse data that reflects the real world.

“However, AI is only as good as the data that it is based on. Feed it biased or non-diverse data, and you’ll have a biased system.”

Often, this bias comes from overfitting a system to a small data set and then applying it to scenarios the data does not cover.

In its work with the autonomous vehicle industry, Mighty AI sees the lack of diversity in data show up in road objects, weather conditions, vehicle types, lighting conditions and more.

“If you have cars on the road in San Francisco, Phoenix, Boston, and Detroit, and you’re only collecting and labeling data from those geographies but plan to deploy a fleet of vehicles in places like Tokyo or Berlin without also collecting data from those geographies, you’ve got a problem,” Nakhuda said.
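The gap Nakhuda describes can be made concrete with a simple coverage check that compares where labeled data was collected with where a system is meant to be deployed. The sketch below is illustrative only: the function name `missing_coverage` and the way the cities are listed are assumptions made for this example, not a description of Mighty AI's tooling.

```python
def missing_coverage(collected, planned):
    """Return planned deployment locations that have no collected data."""
    collected_set = set(collected)
    # Preserve the order of the planned list so the report is easy to read.
    return [city for city in planned if city not in collected_set]


# Cities taken from the example in the quote above.
collected_cities = ["San Francisco", "Phoenix", "Boston", "Detroit"]
planned_cities = ["Boston", "Tokyo", "Berlin"]

gaps = missing_coverage(collected_cities, planned_cities)
print(gaps)
```

A real data audit would look at far more than geography, such as weather, lighting and vehicle types, but the principle is the same: list what the system will face and check it against what the data contains.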

Another big challenge in delivering AI-based solutions is managing the fear that it will take away human jobs. Eightfold.ai is a San Francisco-based company that aims to improve business hiring processes with the help of AI.

Eightfold uses machine learning to analyze a company’s recruitment process and uncover hidden biases in the system. For instance, it found that at many companies, male applicants in the pool for a job had a 50 percent higher chance of being called for an interview than female applicants. With the help of AI, Eightfold could mask details that are not critical to job performance, such as gender, age and race.

“So we said, ‘Can we anonymize everything?’” Eightfold CEO Ashutosh Garg said. “‘Can we mask the name of the applicant so that they don’t know the gender?’ ‘Can we mask the age of the person?’ After that, we have seen that the bias goes away dramatically.”
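In its simplest form, the masking Garg describes amounts to removing selected fields from an application before a reviewer sees it. The following is a minimal sketch under stated assumptions: the field names, the `MASKED_FIELDS` set and the `anonymize` function are hypothetical and are not Eightfold's implementation.

```python
# Fields assumed, for illustration, to be irrelevant to job performance.
MASKED_FIELDS = {"name", "gender", "age", "race"}


def anonymize(application):
    """Return a copy of the application without the masked fields."""
    return {
        key: value
        for key, value in application.items()
        if key not in MASKED_FIELDS
    }


# A made-up applicant record used only to demonstrate the function.
applicant = {
    "name": "Jane Doe",
    "gender": "female",
    "age": 41,
    "skills": ["python", "sql"],
    "years_experience": 12,
}

print(anonymize(applicant))
```

Dropping fields is only a first step. Other details, such as graduation years or club memberships, can still hint at age or gender, so a fuller approach would need to handle those as well.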

Anonymizing candidates for an interview is not a new idea, Garg said. In 1952, the Boston Symphony Orchestra experimented with holding auditions behind a curtain and increased the number of women musicians selected, revealing the unconscious bias toward men among those holding the auditions.

Some subconscious biases are not that obvious. It is common for recruiters to scan profiles on LinkedIn to find talent. Garg pointed out that LinkedIn is a social network, and even at a professional level, it perpetuates the biases of social relationships.

“Most of my first- and second-degree connections on LinkedIn are from my educational and professional background. As a result, a lot of them are Indian software engineers who are males,” Garg said. “When I search for a software engineer on LinkedIn, it is very likely that I will see more Indian males than other people. This could lead to a subconscious bias that software engineers would mostly be Indian males, even though that is not the case.”

Not all biases are offshoots of the subconscious. Sometimes lack of knowledge can lead to bias as well. For example, a company might prefer candidates from Stanford University or the University of California, Berkeley. However, its recruiters may not know about universities in other parts of the world, like Fudan University in China or BITS Pilani in India, which also have high-performing alumni at top global companies. In this case, a human lack of knowledge limits the pool of candidates. Unlike a human, AI has the capacity to scan millions of profiles across different parameters to generate a data set that meets the recruiters’ needs.

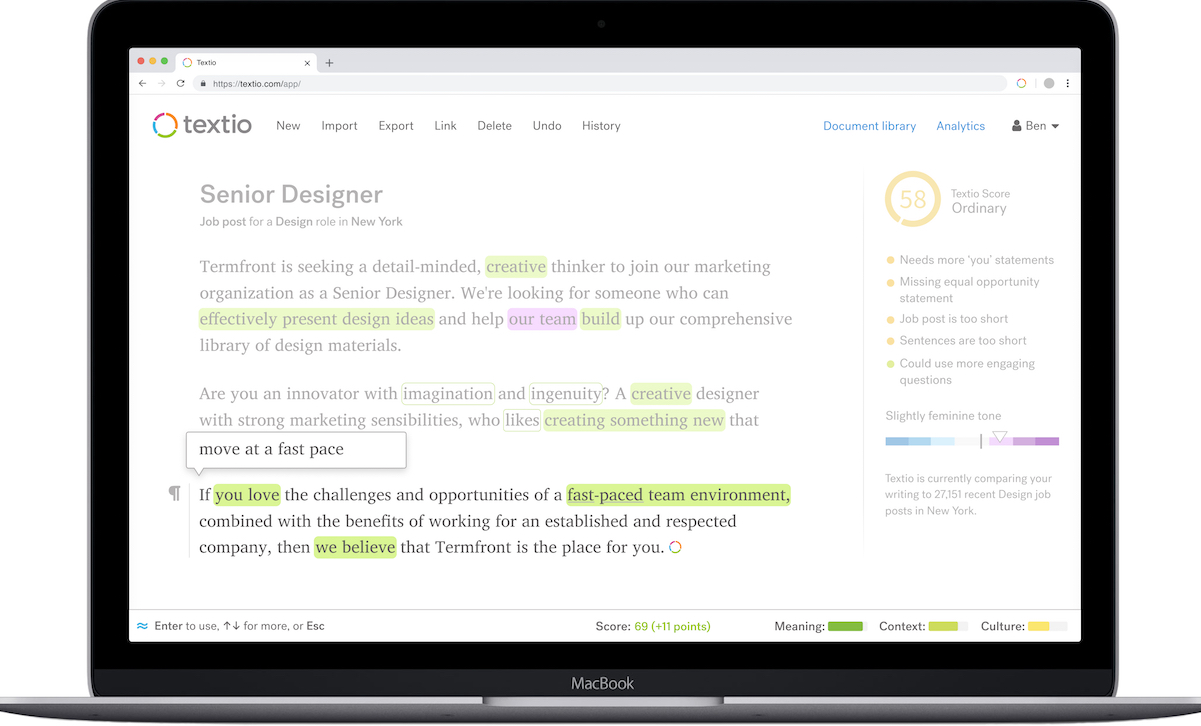

Another way that technology can help close hiring gaps is to use AI to analyze job descriptions to find patterns of bias.

This application of AI is called Augmented Writing. It was developed by Seattle-based Textio and powers the company’s tool TextioHire. The tool helps companies eliminate unconscious bias from job descriptions by identifying language that has been shown to discourage women and minorities from applying. The software also suggests less-biased alternatives.
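The general idea of flagging language and suggesting alternatives can be sketched in a few lines. This is a toy example, not Textio's method: the `SUGGESTIONS` word list and the `flag_terms` function are hypothetical, and the terms were chosen only for illustration. A production tool would presumably learn such patterns from hiring data rather than rely on a fixed list.

```python
import re

# Hypothetical term list with suggested alternatives; not Textio's data.
SUGGESTIONS = {
    "rockstar": "skilled professional",
    "ninja": "expert",
    "aggressive": "proactive",
}


def flag_terms(text):
    """Return (term, suggestion) pairs for flagged words, in order found."""
    words = re.findall(r"[a-z]+", text.lower())
    flagged = []
    seen = set()
    for word in words:
        # Report each flagged term once, the first time it appears.
        if word in SUGGESTIONS and word not in seen:
            seen.add(word)
            flagged.append((word, SUGGESTIONS[word]))
    return flagged


posting = "We need an aggressive coding ninja to join our team."
for term, alternative in flag_terms(posting):
    print(f"Consider replacing '{term}' with '{alternative}'")
```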

TextioHire has been credited with helping companies like Expedia, Zillow and Cisco improve diversity within their workforces, said Charna Parkey, VP of Customer Success at Textio.

According to Cisco’s latest diversity report, the company is now more diverse than at any time since 2000, with almost a quarter of its global workforce made up of women, and half of its US employees identifying as nonwhite or non-Caucasian. In an interview with CNBC, a Cisco spokesperson said that Textio has helped the company increase its female job candidates by 10% and fill positions faster by making sure its job descriptions encourage diverse applicants.

Despite the promise of computers aiding humans to eliminate bias, AI technologies are relatively new and there are undiscovered risks. Last year, the American Civil Liberties Union (ACLU) called for a moratorium on all governmental use of facial recognition technologies.

Technology companies are trying to respond to this concern by forming groups to develop ethical guidelines for AI, including the Partnership on AI.

Nakhuda said that it’s important for the industry to start addressing the ethical concerns of the general public.

“In order to gain consumer trust, people are going to want to know exactly how a particular system works,” said Nakhuda, of Mighty AI. “Deep learning systems, in particular, are very difficult to explain, especially when it comes down to specific inferences.”

As AI-powered solutions spread all around us, it will be crucial to develop a set of rules that govern their use and ensure that human values take precedence over the applications.

Etzioni spells out three fundamental principles that will help guide the safe, effective and beneficial development of AI technologies:

First, AI technology must respect the same laws that apply to its creators and operators. Just as a car should not be used as a getaway vehicle in a bank robbery, AI should not be used to facilitate fraud, violate treaties, or commit other crimes.

Second, regulations for AI should address the individual or group that is deploying the AI technology, not the underlying AI technology itself. For example, a self-driving car may contain AI technology such as machine vision algorithms, general algorithms for collision avoidance, and other general software. If a fault in the underlying software causes an accident, the car manufacturer or driver is responsible, not the software.

Third, creators and operators must specify when an interactive agent is an AI technology or a human. This means that bots, computer programs, videos, etc. that engage in communication with a person must clearly identify themselves as AI.

However, these industry efforts to build ethics have not been coordinated across groups, and there are no commonly understood or adopted standards or regulations. Furthermore, regulatory bodies and most governments are not yet informed enough about the implications of AI technology to regulate it effectively.

Time will tell what kinds of ethical standards on artificial intelligence the technology industry eventually sets, or if governments will have to step in to create limits where the industry can’t — or won’t.

Correction: In an earlier version of this story, Mighty AI was identified by its URL instead of its company name. This has been corrected.

This story was written as part of The Seattle Globalist’s 2019 Emerging Tech Fellowship, in partnership with the University of Washington’s Communication Leadership master’s program.